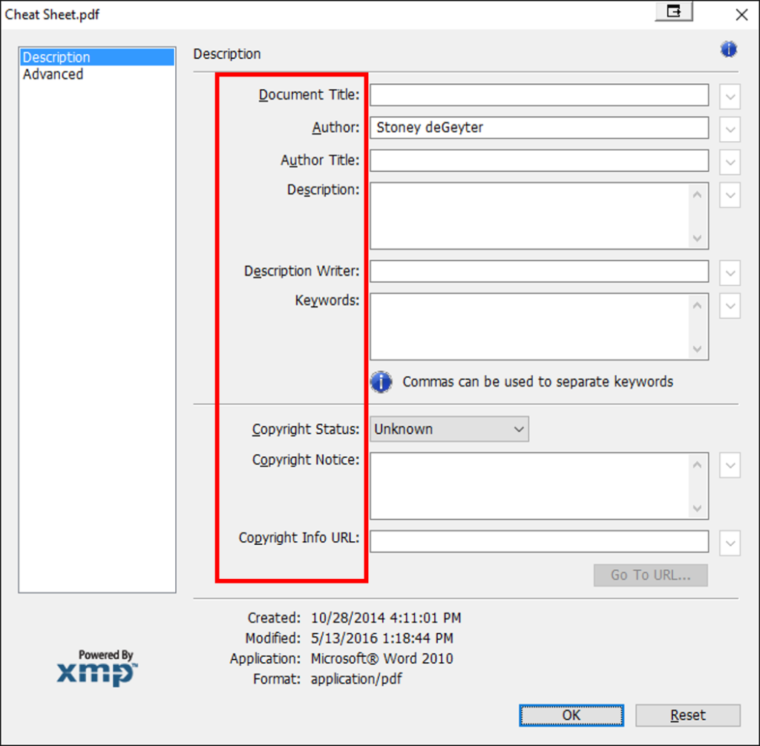

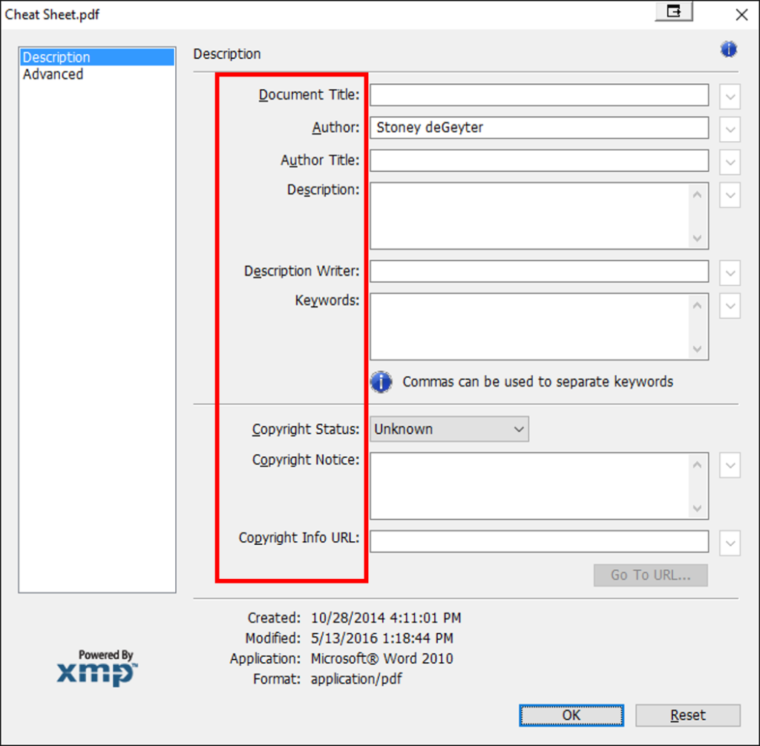

If you don't have custom metadata set up, the only filters you will have are the ones that exist by default in any library (Modified, Modified By, etc.). Metadata Navigation is a feature in SharePoint that allows users to dynamically filter and find content in SharePoint lists and document libraries. Configure your metadata, then upload.

Adobe® InDesign® CS5 How-to guide. Metadata: where to find it, how to add it. Metadata lets you add background information to your images, such as captions or copyright details.

Follow these steps to access the search features of Adobe Acrobat and to find and replace text in a PDF, find text in multiple PDFs, review and save search results, and learn about search feature preferences.



- I have found that SharePoint Online search is not finding keywords that have been entered into the properties of PDF documents. Is this by design, with only the Author, Title, and content being crawled and searchable? I am working with a customer that is adding the keywords into the properties of the PDF when it is created.

- I have a lot of PDF files with metadata like title, subject, author, and so on. But neither Nautilus nor Synapse nor GNOME Do can find any files by their metadata. Is there any program or plugin to search by PDF metadata? I know about the Nautilus columns plugin for displaying title and author, but it doesn't allow you to search on them.

- PDF metadata standards. There are a number of standards for enriching PDF files with metadata. Below is a short summary: There are PDF substandards such as PDF/X and PDF/A that require the use of specific metadata. In a PDF/X-1a file, for example, there has to be a metadata field that describes whether the PDF file has been trapped or not.

This article shows how to use Azure Search to index documents (such as PDFs, Microsoft Office documents, and several other common formats) stored in Azure Blob storage. First, it explains the basics of setting up and configuring a blob indexer. Then, it offers a deeper exploration of behaviors and scenarios you are likely to encounter.

Supported document formats

The blob indexer can extract text from the following document formats:

- PDF

- Microsoft Office formats: DOCX/DOC/DOCM, XLSX/XLS/XLSM, PPTX/PPT/PPTM, MSG (Outlook emails), XML (both 2003 and 2006 Word XML)

- Open Document formats: ODT, ODS, ODP

- HTML

- XML

- ZIP

- GZ

- EPUB

- EML

- RTF

- Plain text files (see also Indexing plain text)

- JSON (see Indexing JSON blobs)

- CSV (see Indexing CSV blobs; preview feature)

Setting up blob indexing

You can set up an Azure Blob Storage indexer using:

- Azure Search REST API

- Azure Search .NET SDK

Note

Some features (for example, field mappings) are not yet available in the portal, and have to be used programmatically.

Here, we demonstrate the flow using the REST API.

Step 1: Create a data source

A data source specifies which data to index, credentials needed to access the data, and policies to efficiently identify changes in the data (new, modified, or deleted rows). A data source can be used by multiple indexers in the same search service.

For blob indexing, the data source must have the following required properties:

- name is the unique name of the data source within your search service.

- type must be azureblob.

- credentials provides the storage account connection string as the credentials.connectionString parameter. See How to specify credentials below for details.

- container specifies a container in your storage account. By default, all blobs within the container are retrievable. If you only want to index blobs in a particular virtual directory, you can specify that directory using the optional query parameter.

To create a data source:
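As a sketch of this step, the following Python builds (but does not send) a Create Data Source request using only the standard library. The service name, admin key, data source name, and container name are hypothetical placeholders, and the api-version value 2019-05-06 is an assumption; check which REST API versions your search service supports.

```python
import json
import urllib.request

# Assumed REST API version; verify against the versions your service supports.
API_VERSION = "2019-05-06"


def build_datasource_request(service_name, admin_key, datasource_name,
                             connection_string, container_name, query=None):
    """Build (without sending) a Create Data Source request for blob indexing."""
    container = {"name": container_name}
    if query is not None:
        # Optional: restrict indexing to one virtual directory in the container.
        container["query"] = query
    body = {
        "name": datasource_name,
        "type": "azureblob",
        "credentials": {"connectionString": connection_string},
        "container": container,
    }
    url = (f"https://{service_name}.search.windows.net/datasources"
           f"?api-version={API_VERSION}")
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "api-key": admin_key},
        method="POST",
    )


# Hypothetical values; send with urllib.request.urlopen(req) once they are real.
req = build_datasource_request("my-service", "<admin key>", "blob-datasource",
                               "<storage connection string>", "my-container")
```

Passing query="my-folder" (a hypothetical folder name) limits the data source to that virtual directory.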

For more on the Create Datasource API, see Create Datasource.

How to specify credentials

You can provide the credentials for the blob container in one of these ways:

- Full access storage account connection string:

  `DefaultEndpointsProtocol=https;AccountName=<your storage account>;AccountKey=<your account key>`

  You can get the connection string from the Azure portal by navigating to the storage account blade > Settings > Keys (for Classic storage accounts) or Settings > Access keys (for Azure Resource Manager storage accounts).

- Storage account shared access signature (SAS) connection string:

  `BlobEndpoint=https://<your account>.blob.core.windows.net/;SharedAccessSignature=?sv=2016-05-31&sig=<the signature>&spr=https&se=<the validity end time>&srt=co&ss=b&sp=rl`

  The SAS should have the list and read permissions on containers and objects (blobs in this case).

- Container shared access signature:

  `ContainerSharedAccessUri=https://<your storage account>.blob.core.windows.net/<container name>?sv=2016-05-31&sr=c&sig=<the signature>&se=<the validity end time>&sp=rl`

  The SAS should have the list and read permissions on the container.
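The three credential formats above are plain strings, so they can be assembled with simple formatting. This is a minimal sketch; the function names are hypothetical, and the SAS token is assumed to be the query string of an existing signature, including its leading "?".

```python
def account_key_connection_string(account_name, account_key):
    # Full-access storage account connection string.
    return (f"DefaultEndpointsProtocol=https;AccountName={account_name};"
            f"AccountKey={account_key}")


def account_sas_connection_string(account_name, sas_token):
    # Storage account SAS connection string; sas_token starts with "?".
    return (f"BlobEndpoint=https://{account_name}.blob.core.windows.net/;"
            f"SharedAccessSignature={sas_token}")


def container_sas_credentials(account_name, container_name, sas_token):
    # Container SAS URI; sas_token starts with "?".
    return (f"ContainerSharedAccessUri=https://{account_name}.blob.core.windows.net/"
            f"{container_name}{sas_token}")
```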

For more info on storage shared access signatures, see Using Shared Access Signatures.

Note

If you use SAS credentials, you will need to update the data source credentials periodically with renewed signatures to prevent their expiration. If SAS credentials expire, the indexer will fail with an error message similar to "Credentials provided in the connection string are invalid or have expired."
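To know when a signature needs renewing, you can read its expiry time from the se parameter of the SAS query string. The sketch below assumes you pass only the query string (for a ContainerSharedAccessUri, the part after the "?"), treats times without an offset as UTC, and uses a hypothetical seven-day renewal margin.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import parse_qs


def sas_expiry(sas_token):
    """Return the SAS expiry time (the 'se' parameter) as an aware UTC datetime."""
    params = parse_qs(sas_token.lstrip("?"))
    expiry = params["se"][0]
    # Older Python versions' fromisoformat() does not accept a trailing "Z".
    parsed = datetime.fromisoformat(expiry.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed


def needs_renewal(sas_token, now=None, margin=timedelta(days=7)):
    """True when the signature expires within `margin` of `now`."""
    if now is None:
        now = datetime.now(timezone.utc)
    return sas_expiry(sas_token) - now <= margin
```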

Step 2: Create an index

The index specifies the fields in a document, attributes, and other constructs that shape the search experience.

Here's how to create an index with a searchable content field to store the text extracted from blobs:
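A minimal index definition for this step, written as a Python dict that serializes to the JSON body of a Create Index request. The index name and the key field name id are hypothetical; the content field is searchable and otherwise plain.

```python
import json


def build_index_definition(index_name):
    """Index with one key field and a searchable 'content' field for blob text."""
    return {
        "name": index_name,
        "fields": [
            # Every index needs exactly one key field of type Edm.String.
            {"name": "id", "type": "Edm.String", "key": True, "searchable": False},
            # Receives the text the blob indexer extracts from each blob.
            {"name": "content", "type": "Edm.String", "searchable": True,
             "filterable": False, "sortable": False, "facetable": False},
        ],
    }


index = build_index_definition("my-target-index")
print(json.dumps(index, indent=2))  # body for POST /indexes?api-version=...
```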

For more on creating indexes, see Create Index

Step 3: Create an indexer

An indexer connects a data source with a target search index, and provides a schedule to automate the data refresh.

Once the index and data source have been created, you're ready to create the indexer:
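A sketch of the indexer definition as a Python dict. The indexer, data source, and index names are hypothetical; the schedule interval defaults to 'PT2H' to match the two-hour schedule described next, and passing None omits the schedule so the indexer runs once.

```python
import json


def build_indexer_definition(name, datasource_name, index_name, interval="PT2H"):
    """JSON body for a Create Indexer request."""
    body = {
        "name": name,
        "dataSourceName": datasource_name,
        "targetIndexName": index_name,
    }
    if interval is not None:
        # ISO 8601 duration, for example "PT2H" (2 hours) or "PT30M" (30 minutes).
        body["schedule"] = {"interval": interval}
    return body


indexer = build_indexer_definition("blob-indexer", "blob-datasource", "my-target-index")
print(json.dumps(indexer, indent=2))  # body for POST /indexers?api-version=...
```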

This indexer will run every two hours (the schedule interval is set to 'PT2H'). To run an indexer every 30 minutes, set the interval to 'PT30M'. The shortest supported interval is 5 minutes. The schedule is optional: if it is omitted, an indexer runs only once, when it's created. However, you can run an indexer on demand at any time.
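Interval values such as 'PT2H' and 'PT30M' are ISO 8601 durations. This client-side check is a hypothetical helper, not part of any SDK: it parses only the days/hours/minutes/seconds subset of the format and rejects anything shorter than the 5-minute minimum stated above.

```python
import re
from datetime import timedelta

# Subset of ISO 8601 durations: optional days, then optional T with H/M/S parts.
_DURATION = re.compile(r"^P(?:(\d+)D)?(?:T(?:(\d+)H)?(?:(\d+)M)?(?:(\d+)S)?)?$")


def parse_interval(value):
    match = _DURATION.match(value)
    # Reject empty forms such as "P", "PT", or "P1DT" that the pattern allows.
    if not match or value in ("P", "PT") or value.endswith("T"):
        raise ValueError(f"unsupported duration: {value!r}")
    days, hours, minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return timedelta(days=days, hours=hours, minutes=minutes, seconds=seconds)


def check_schedule_interval(value, minimum=timedelta(minutes=5)):
    interval = parse_interval(value)
    if interval < minimum:
        raise ValueError(f"{value} is shorter than the {minimum} minimum")
    return interval
```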

For more details on the Create Indexer API, check out Create Indexer.

For more information about defining indexer schedules, see How to schedule indexers for Azure Search.

How Azure Search indexes blobs

Depending on the indexer configuration, the blob indexer can index storage metadata only (useful when you only care about the metadata and don't need to index the content of blobs), storage and content metadata, or both metadata and textual content. By default, the indexer extracts both metadata and content.

Note

By default, blobs with structured content such as JSON or CSV are indexed as a single chunk of text. If you want to index JSON and CSV blobs in a structured way, see Indexing JSON blobs and Indexing CSV blobs for more information.

A compound or embedded document (such as a ZIP archive or a Word document with embedded Outlook email containing attachments) is also indexed as a single document.

- The textual content of the document is extracted into a string field named content.

Note

Azure Search limits how much text it extracts depending on the pricing tier: 32,000 characters for Free tier, 64,000 for Basic, and 4 million for Standard, Standard S2 and Standard S3 tiers. A warning is included in the indexer status response for truncated documents.

- User-specified metadata properties present on the blob, if any, are extracted verbatim.

- Standard blob metadata properties are extracted into the following fields:

  - metadata_storage_name (Edm.String) - the file name of the blob. For example, if you have a blob /my-container/my-folder/subfolder/resume.pdf, the value of this field is resume.pdf.

  - metadata_storage_path (Edm.String) - the full URI of the blob, including the storage account. For example, https://myaccount.blob.core.windows.net/my-container/my-folder/subfolder/resume.pdf

  - metadata_storage_content_type (Edm.String) - content type as specified by the code you used to upload the blob. For example, application/octet-stream.

  - metadata_storage_last_modified (Edm.DateTimeOffset) - last modified timestamp for the blob. Azure Search uses this timestamp to identify changed blobs, to avoid reindexing everything after the initial indexing.

  - metadata_storage_size (Edm.Int64) - blob size in bytes.

  - metadata_storage_content_md5 (Edm.String) - MD5 hash of the blob content, if available.

  - metadata_storage_sas_token (Edm.String) - a temporary SAS token that can be used by custom skills to get access to the blob. This token should not be stored for later use, as it might expire.

- Metadata properties specific to each document format are extracted into the fields listed in the Content type-specific metadata properties table below.

You don't need to define fields for all of the above properties in your search index - just capture the properties you need for your application.

Note

Often, the field names in your existing index will be different from the field names generated during document extraction. You can use field mappings to map the property names provided by Azure Search to the field names in your search index. You will see an example of field mappings use below.

Defining document keys and field mappings

In Azure Search, the document key uniquely identifies a document. Every search index must have exactly one key field of type Edm.String. The key field is required for each document that is being added to the index (it is actually the only required field).

You should carefully consider which extracted field should map to the key field for your index. The candidates are:

- metadata_storage_name - this might be a convenient candidate, but note that 1) the names might not be unique, as you may have blobs with the same name in different folders, and 2) the name may contain characters that are invalid in document keys, such as periods or spaces. You can deal with invalid characters by using the base64Encode field mapping function. If you do this, remember to encode document keys when passing them in API calls such as Lookup. (For example, in .NET you can use the UrlTokenEncode method for that purpose.)

- metadata_storage_path - using the full path ensures uniqueness, but the path definitely contains / characters that are invalid in a document key. As above, you have the option of encoding the keys using the base64Encode function.

- If none of the options above work for you, you can add a custom metadata property to the blobs. This option does, however, require your blob upload process to add that metadata property to all blobs. Since the key is a required property, all blobs that don't have that property will fail to be indexed.
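When keys are base64-encoded, you need the same encoding on the client to call APIs such as Lookup. The sketch below approximates the .NET UrlTokenEncode method mentioned above: URL-safe base64 with the trailing '=' padding replaced by a single digit giving the padding count. Whether its output matches the indexer's base64Encode mapping function depends on that function's settings and the API version, so compare it against a key from your own index before relying on it.

```python
import base64


def url_token_encode(value):
    """Approximation of .NET HttpServerUtility.UrlTokenEncode for a UTF-8 string."""
    if not value:
        return ""
    # URL-safe alphabet: '-' and '_' instead of '+' and '/'.
    encoded = base64.urlsafe_b64encode(value.encode("utf-8")).decode("ascii")
    stripped = encoded.rstrip("=")
    # Append the number of removed padding characters (0, 1, or 2).
    return stripped + str(len(encoded) - len(stripped))


def url_token_decode(token):
    """Inverse of url_token_encode."""
    if not token:
        return ""
    padding = int(token[-1])
    return base64.urlsafe_b64decode(token[:-1] + "=" * padding).decode("utf-8")
```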

Important

If there is no explicit mapping for the key field in the index, Azure Search automatically uses metadata_storage_path as the key and base-64 encodes key values (the second option above).

For this example, let's pick the metadata_storage_name field as the document key. Let's also assume your index has a key field named key and a field fileSize for storing the document size. To wire things up as desired, specify the following field mappings when creating or updating your indexer:
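The two mappings described here, written as the fieldMappings list of an indexer definition. The target names key and fileSize come from the example above; the base64Encode mapping function makes file names usable as document keys.

```python
import json

field_mappings = [
    # metadata_storage_name -> key, base64-encoded so it is a valid document key.
    {"sourceFieldName": "metadata_storage_name", "targetFieldName": "key",
     "mappingFunction": {"name": "base64Encode"}},
    # metadata_storage_size -> fileSize, copied as-is.
    {"sourceFieldName": "metadata_storage_size", "targetFieldName": "fileSize"},
]
print(json.dumps({"fieldMappings": field_mappings}, indent=2))
```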

To bring this all together, here's how you can add field mappings and enable base-64 encoding of keys for an existing indexer:
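A sketch of that update as a PUT request to an existing indexer, built with the standard library but not sent. The names blob-indexer, blob-datasource, and my-target-index are hypothetical, and the api-version value 2019-05-06 is an assumption.

```python
import json
import urllib.request

API_VERSION = "2019-05-06"  # assumption; check what your service supports


def build_update_indexer_request(service_name, admin_key):
    body = {
        "dataSourceName": "blob-datasource",
        "targetIndexName": "my-target-index",
        "schedule": {"interval": "PT2H"},
        "fieldMappings": [
            {"sourceFieldName": "metadata_storage_name", "targetFieldName": "key",
             "mappingFunction": {"name": "base64Encode"}},
            {"sourceFieldName": "metadata_storage_size", "targetFieldName": "fileSize"},
        ],
    }
    url = (f"https://{service_name}.search.windows.net/indexers/blob-indexer"
           f"?api-version={API_VERSION}")
    # PUT replaces the indexer definition with this body.
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "api-key": admin_key},
        method="PUT",
    )


req = build_update_indexer_request("my-service", "<admin key>")
```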

Note

To learn more about field mappings, see this article.

Controlling which blobs are indexed

You can control which blobs are indexed, and which are skipped.

Index only the blobs with specific file extensions

You can index only the blobs with the file name extensions you specify by using the indexedFileNameExtensions indexer configuration parameter. The value is a string containing a comma-separated list of file extensions (with a leading dot). For example, to index only the .PDF and .DOCX blobs, do this:
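The setting goes under parameters.configuration in the indexer definition. A minimal fragment, shown as a Python dict:

```python
import json

# Indexer "parameters" fragment: index only .pdf and .docx blobs.
parameters = {
    "parameters": {
        "configuration": {"indexedFileNameExtensions": ".pdf,.docx"}
    }
}
print(json.dumps(parameters, indent=2))
```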

Exclude blobs with specific file extensions

You can exclude blobs with specific file name extensions from indexing by using the excludedFileNameExtensions configuration parameter. The value is a string containing a comma-separated list of file extensions (with a leading dot). For example, to index all blobs except those with the .PNG and .JPEG extensions, do this:
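The corresponding fragment, again under parameters.configuration:

```python
import json

# Indexer "parameters" fragment: skip .png and .jpeg blobs, index everything else.
parameters = {
    "parameters": {
        "configuration": {"excludedFileNameExtensions": ".png,.jpeg"}
    }
}
print(json.dumps(parameters, indent=2))
```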

If both indexedFileNameExtensions and excludedFileNameExtensions parameters are present, Azure Search first looks at indexedFileNameExtensions, then at excludedFileNameExtensions. This means that if the same file extension is present in both lists, it will be excluded from indexing.
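The precedence rule can be illustrated with a small local function (a hypothetical helper, not the service's implementation): a blob must pass the include list, if any, and must not appear in the exclude list. Comparing extensions case-insensitively is an assumption of this sketch.

```python
import posixpath


def _parse_extensions(value):
    # ".pdf,.docx" -> {".pdf", ".docx"}; lowercased (case-insensitivity is assumed).
    if not value:
        return set()
    return {ext.strip().lower() for ext in value.split(",") if ext.strip()}


def is_indexed(blob_name, indexed=None, excluded=None):
    """Apply indexedFileNameExtensions first, then excludedFileNameExtensions."""
    ext = posixpath.splitext(blob_name)[1].lower()
    included = _parse_extensions(indexed)
    if included and ext not in included:
        return False
    # Exclusion wins when an extension appears in both lists.
    return ext not in _parse_extensions(excluded)
```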

Controlling which parts of the blob are indexed

You can control which parts of the blobs are indexed using the dataToExtract configuration parameter. It can take the following values:

- storageMetadata - specifies that only the standard blob properties and user-specified metadata are indexed.

- allMetadata - specifies that storage metadata and the content-type specific metadata extracted from the blob content are indexed.

- contentAndMetadata - specifies that all metadata and textual content extracted from the blob are indexed. This is the default value.

For example, to index only the storage metadata, use:
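A sketch that builds the configuration fragment and rejects values other than the three listed above; the helper name is hypothetical.

```python
import json

# The three documented values; contentAndMetadata is the default.
DATA_TO_EXTRACT_VALUES = ("storageMetadata", "allMetadata", "contentAndMetadata")


def data_to_extract_parameters(value):
    if value not in DATA_TO_EXTRACT_VALUES:
        raise ValueError(f"unknown dataToExtract value: {value!r}")
    return {"parameters": {"configuration": {"dataToExtract": value}}}


print(json.dumps(data_to_extract_parameters("storageMetadata")))
```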

Using blob metadata to control how blobs are indexed

The configuration parameters described above apply to all blobs. Sometimes, you may want to control how individual blobs are indexed. You can do this by adding the following blob metadata properties and values:

| Property name | Property value | Explanation |
|---|---|---|
| AzureSearch_Skip | 'true' | Instructs the blob indexer to completely skip the blob. Neither metadata nor content extraction is attempted. This is useful when a particular blob fails repeatedly and interrupts the indexing process. |
| AzureSearch_SkipContent | 'true' | This is the equivalent of the 'dataToExtract' : 'allMetadata' setting described above, scoped to a particular blob. |
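The per-blob overrides in the table can be modeled as a local function that returns what gets extracted for one blob. This is an illustration, not the service's code; it assumes the 'true' value is compared case-insensitively and that AzureSearch_SkipContent only narrows extraction (it never widens storageMetadata to allMetadata).

```python
def effective_extraction(data_to_extract, blob_metadata):
    """Return what is extracted for one blob, or None if the blob is skipped.

    data_to_extract: the indexer-wide dataToExtract value.
    blob_metadata: dict of the blob's user-specified metadata.
    """
    def flag(name):
        # Assumption: 'true', 'True', and 'TRUE' are all honored.
        return str(blob_metadata.get(name, "")).lower() == "true"

    if flag("AzureSearch_Skip"):
        return None  # neither metadata nor content is extracted
    if flag("AzureSearch_SkipContent") and data_to_extract == "contentAndMetadata":
        return "allMetadata"  # same as dataToExtract=allMetadata for this blob
    return data_to_extract
```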

Dealing with errors

By default, the blob indexer stops as soon as it encounters a blob with an unsupported content type (for example, an image). You can of course use the excludedFileNameExtensions parameter to skip certain content types. However, you may need to index blobs without knowing all the possible content types in advance. To continue indexing when an unsupported content type is encountered, set the failOnUnsupportedContentType configuration parameter to false:

For some blobs, Azure Search is unable to determine the content type, or unable to process a document of otherwise supported content type. To ignore this failure mode, set the failOnUnprocessableDocument configuration parameter to false:

Azure Search limits the size of blobs that are indexed. These limits are documented in Service Limits in Azure Search. Oversized blobs are treated as errors by default. However, you can still index storage metadata of oversized blobs if you set the indexStorageMetadataOnlyForOversizedDocuments configuration parameter to true:
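The three error-handling settings described in this section all live under parameters.configuration. A combined fragment, shown as a Python dict (json.dumps renders the booleans as false/true):

```python
import json

parameters = {
    "parameters": {
        "configuration": {
            # Keep going past blobs with unsupported content types (for example, images).
            "failOnUnsupportedContentType": False,
            # Keep going past blobs whose content cannot be identified or processed.
            "failOnUnprocessableDocument": False,
            # For blobs over the size limit, index storage metadata only.
            "indexStorageMetadataOnlyForOversizedDocuments": True,
        }
    }
}
print(json.dumps(parameters, indent=2))
```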

You can also continue indexing if errors happen at any point of processing, either while parsing blobs or while adding documents to an index. To ignore a specific number of errors, set the maxFailedItems and maxFailedItemsPerBatch configuration parameters to the desired values. For example:
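Unlike the settings above, these two limits sit directly under parameters rather than under parameters.configuration. A fragment tolerating up to 10 failures (an example value):

```python
import json

parameters = {
    "parameters": {
        # Directly under "parameters", not inside "configuration".
        "maxFailedItems": 10,
        "maxFailedItemsPerBatch": 10,
    }
}
print(json.dumps(parameters, indent=2))
```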

Incremental indexing and deletion detection

When you set up a blob indexer to run on a schedule, it reindexes only the changed blobs, as determined by the blob's LastModified timestamp.

Note

You don't have to specify a change detection policy; incremental indexing is enabled for you automatically.
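Conceptually, change detection compares each blob's LastModified timestamp against the previous run. The sketch below is a local illustration of that idea with hypothetical inputs, not how the service tracks its high-water mark internally.

```python
from datetime import datetime, timezone


def blobs_to_reindex(blobs, last_run):
    """Select blobs changed since the previous run.

    blobs: iterable of (name, last_modified) pairs with timezone-aware datetimes.
    last_run: aware datetime of the previous run, or None for the initial run,
    in which case every blob is selected.
    """
    if last_run is None:
        return [name for name, _ in blobs]
    return [name for name, modified in blobs if modified > last_run]


blobs = [
    ("a.pdf", datetime(2020, 1, 1, tzinfo=timezone.utc)),
    ("b.pdf", datetime(2020, 3, 1, tzinfo=timezone.utc)),
]
```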

To support deleting documents, use a 'soft delete' approach. If you delete the blobs outright, corresponding documents will not be removed from the search index. Instead, use the following steps:

- Add a custom metadata property to the blob to indicate to Azure Search that it is logically deleted

- Configure a soft deletion detection policy on the data source

- Once the indexer has processed the blob (as shown by the indexer status API), you can physically delete the blob

For example, the following policy considers a blob to be deleted if it has a metadata property IsDeleted with the value true:
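A sketch of a data source carrying that policy, as a Python dict. The data source name, container, and connection string are hypothetical placeholders; the is_soft_deleted helper only illustrates the rule locally.

```python
import json


def soft_delete_policy(column="IsDeleted", marker="true"):
    return {
        "@odata.type": "#Microsoft.Azure.Search.SoftDeleteColumnDeletionDetectionPolicy",
        "softDeleteColumnName": column,
        "softDeleteMarkerValue": marker,
    }


datasource = {
    "name": "blob-datasource",  # hypothetical
    "type": "azureblob",
    "credentials": {"connectionString": "<your storage connection string>"},
    "container": {"name": "my-container"},  # hypothetical
    "dataDeletionDetectionPolicy": soft_delete_policy(),
}


def is_soft_deleted(blob_metadata, column="IsDeleted", marker="true"):
    # Local illustration: the blob counts as deleted when the value matches the marker.
    return blob_metadata.get(column) == marker


print(json.dumps(datasource, indent=2))
```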

Indexing large datasets

Indexing blobs can be a time-consuming process. In cases where you have millions of blobs to index, you can speed up indexing by partitioning your data and using multiple indexers to process the data in parallel. Here's how you can set this up:

- Partition your data into multiple blob containers or virtual folders.

- Set up several Azure Search data sources, one per container or folder. To point to a blob folder, use the query parameter.

- Create a corresponding indexer for each data source. All the indexers can point to the same target search index.

- One search unit in your service can run one indexer at any given time. Creating multiple indexers as described above is only useful if they actually run in parallel. To run multiple indexers in parallel, scale out your search service by creating an appropriate number of partitions and replicas. For example, if your search service has 6 search units (for example, 2 partitions x 3 replicas), then 6 indexers can run simultaneously, resulting in a six-fold increase in the indexing throughput. To learn more about scaling and capacity planning, see Scale resource levels for query and indexing workloads in Azure Search.
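The steps above can be sketched as a function that generates one data source (using the container query parameter for a virtual folder) and one indexer per folder, all targeting the same index. The naming scheme blob-datasource-N and blob-indexer-N is hypothetical; the second function encodes the partitions x replicas arithmetic from the last step.

```python
def partitioned_definitions(container, folders, index_name, connection_string):
    """One data source and one indexer per virtual folder, sharing one index."""
    datasources, indexers = [], []
    for i, folder in enumerate(folders, start=1):
        ds_name = f"blob-datasource-{i}"
        datasources.append({
            "name": ds_name,
            "type": "azureblob",
            "credentials": {"connectionString": connection_string},
            "container": {"name": container, "query": folder},
        })
        indexers.append({
            "name": f"blob-indexer-{i}",
            "dataSourceName": ds_name,
            "targetIndexName": index_name,  # every indexer writes to the same index
        })
    return datasources, indexers


def max_parallel_indexers(partitions, replicas):
    # One search unit runs one indexer at a time; search units = partitions x replicas.
    return partitions * replicas
```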

Indexing documents along with related data

You may want to 'assemble' documents from multiple sources in your index. For example, you may want to merge text from blobs with other metadata stored in Cosmos DB. You can even use the push indexing API together with various indexers to build up search documents from multiple parts.

For this to work, all indexers and other components need to agree on the document key. For additional details on this topic, refer to Index multiple Azure data sources. For a detailed walk-through, see this external article: Combine documents with other data in Azure Search.

Indexing plain text

If all your blobs contain plain text in the same encoding, you can significantly improve indexing performance by using text parsing mode. To use text parsing mode, set the parsingMode configuration property to text:

By default, UTF-8 encoding is assumed. To specify a different encoding, use the encoding configuration property:
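Both properties go under parameters.configuration. In this fragment, windows-1252 is only an example encoding name; omit the encoding key to keep the UTF-8 default.

```python
import json

parameters = {
    "parameters": {
        "configuration": {
            "parsingMode": "text",
            # Example only; leave this out to use the default UTF-8.
            "encoding": "windows-1252",
        }
    }
}
print(json.dumps(parameters, indent=2))
```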

Content type-specific metadata properties

The following table summarizes processing done for each document format, and describes the metadata properties extracted by Azure Search.

| Document format / content type | Content-type specific metadata properties | Processing details |
|---|---|---|
| HTML (text/html) | metadata_content_encoding, metadata_content_type, metadata_language, metadata_description, metadata_keywords, metadata_title | Strip HTML markup and extract text |
| PDF (application/pdf) | metadata_content_type, metadata_language, metadata_author, metadata_title | Extract text, including embedded documents (excluding images) |
| DOCX (application/vnd.openxmlformats-officedocument.wordprocessingml.document) | metadata_content_type, metadata_author, metadata_character_count, metadata_creation_date, metadata_last_modified, metadata_page_count, metadata_word_count | Extract text, including embedded documents |
| DOC (application/msword) | metadata_content_type, metadata_author, metadata_character_count, metadata_creation_date, metadata_last_modified, metadata_page_count, metadata_word_count | Extract text, including embedded documents |
| DOCM (application/vnd.ms-word.document.macroenabled.12) | metadata_content_type, metadata_author, metadata_character_count, metadata_creation_date, metadata_last_modified, metadata_page_count, metadata_word_count | Extract text, including embedded documents |
| WORD XML (application/vnd.ms-word2006ml) | metadata_content_type, metadata_author, metadata_character_count, metadata_creation_date, metadata_last_modified, metadata_page_count, metadata_word_count | Strip XML markup and extract text |
| WORD 2003 XML (application/vnd.ms-wordml) | metadata_content_type, metadata_author, metadata_creation_date | Strip XML markup and extract text |
| XLSX (application/vnd.openxmlformats-officedocument.spreadsheetml.sheet) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified | Extract text, including embedded documents |
| XLS (application/vnd.ms-excel) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified | Extract text, including embedded documents |
| XLSM (application/vnd.ms-excel.sheet.macroenabled.12) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified | Extract text, including embedded documents |
| PPTX (application/vnd.openxmlformats-officedocument.presentationml.presentation) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified, metadata_slide_count, metadata_title | Extract text, including embedded documents |
| PPT (application/vnd.ms-powerpoint) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified, metadata_slide_count, metadata_title | Extract text, including embedded documents |
| PPTM (application/vnd.ms-powerpoint.presentation.macroenabled.12) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified, metadata_slide_count, metadata_title | Extract text, including embedded documents |
| MSG (application/vnd.ms-outlook) | metadata_content_type, metadata_message_from, metadata_message_from_email, metadata_message_to, metadata_message_to_email, metadata_message_cc, metadata_message_cc_email, metadata_message_bcc, metadata_message_bcc_email, metadata_creation_date, metadata_last_modified, metadata_subject | Extract text, including attachments |
| ODT (application/vnd.oasis.opendocument.text) | metadata_content_type, metadata_author, metadata_character_count, metadata_creation_date, metadata_last_modified, metadata_page_count, metadata_word_count | Extract text, including embedded documents |
| ODS (application/vnd.oasis.opendocument.spreadsheet) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified | Extract text, including embedded documents |
| ODP (application/vnd.oasis.opendocument.presentation) | metadata_content_type, metadata_author, metadata_creation_date, metadata_last_modified, title | Extract text, including embedded documents |
| ZIP (application/zip) | metadata_content_type | Extract text from all documents in the archive |
| GZ (application/gzip) | metadata_content_type | Extract text from all documents in the archive |
| EPUB (application/epub+zip) | metadata_content_type, metadata_author, metadata_creation_date, metadata_title, metadata_description, metadata_language, metadata_keywords, metadata_identifier, metadata_publisher | Extract text from all documents in the archive |
| XML (application/xml) | metadata_content_type, metadata_content_encoding | Strip XML markup and extract text |
| JSON (application/json) | metadata_content_type, metadata_content_encoding | Extract text. Note: if you need to extract multiple document fields from a JSON blob, see Indexing JSON blobs for details |
| EML (message/rfc822) | metadata_content_type, metadata_message_from, metadata_message_to, metadata_message_cc, metadata_creation_date, metadata_subject | Extract text, including attachments |
| RTF (application/rtf) | metadata_content_type, metadata_author, metadata_character_count, metadata_creation_date, metadata_page_count, metadata_word_count | Extract text |
| Plain text (text/plain) | metadata_content_type, metadata_content_encoding | Extract text |


You have lots of control and lots of possibilities for running effective and efficient searches in Adobe Acrobat. A search can be broad or narrow, including many different kinds of data and covering multiple Adobe PDFs.

If you work with large numbers of related PDFs, you can define them as a catalog in Acrobat Pro, which generates a PDF index for the PDFs. Searching the PDF index—instead of the PDFs themselves—dramatically speeds up searches. See Creating PDF indexes.

You run searches to find specific items in PDFs. You can run a simple search, looking for a search term within a single file, or you can run a more complex search, looking for various kinds of data in one or more PDFs. You can selectively replace text.

You can run a search using either the Search window or the Find toolbar. In either case, Acrobat searches the PDF body text, layers, form fields, and digital signatures. You can also include bookmarks and comments in the search. Only the Find toolbar includes a Replace With option.

When you type the first few letters to search in a PDF, Acrobat provides suggestions for the matching word and its frequency of occurrence in the document. When you select the word, Acrobat highlights all the matching results in the PDF.

The Search window offers more options and more kinds of searches than the Find toolbar. When you use the Search window, object data and image XIF (extended image file format) metadata are also searched. For searches across multiple PDFs, Acrobat also looks at document properties and XMP metadata, and it searches indexed structure tags when searching a PDF index. If some of the PDFs you search have attached PDFs, you can include the attachments in the search.

Note:

PDFs can have multiple layers. If the search results include an occurrence on a hidden layer, selecting that occurrence displays an alert that asks if you want to make that layer visible.

Where you start your search depends on the type of search you want to run. Use the Find toolbar for a quick search of the current PDF and to replace text. Use the Search window to look for words or document properties across multiple PDFs, use advanced search options, and search PDF indexes.

A. Find field B. Find Previous C. Find Next D. Replace With (expands to provide a text field)

- Choose Edit > Advanced Search (Shift+Ctrl/Command+F).

- On the Find toolbar, click the arrow and choose Open Full Acrobat Search.

Search appears as a separate window that you can move, resize, minimize, or arrange partially or completely behind the PDF window.

Arrange the PDF document window and Search window

Acrobat resizes and arranges the two windows side by side so that together they almost fill the entire screen.

Note: Clicking the Arrange Windows button a second time resizes the document window but leaves the Search window unchanged. If you want to make the Search window larger or smaller, drag the corner or edge, as you would to resize any window on your operating system.

The Find toolbar searches the currently open PDF. You can selectively replace the search term with alternative text. You replace text one instance at a time; you cannot make a global change throughout a PDF or across multiple PDFs.

- Type the text you want to search for in the text box on the Find toolbar.

- To replace text, click Replace With to expand the toolbar, then type the replacement text in the Replace With text box.

- (Optional) Click the arrow next to the text box and choose one or more of the following:

Includes the basic search options plus five additional options:

Use These Additional Criteria (document properties)

Appears only for searches across multiple PDFs or PDF indexes. You can select multiple property-modifier-value combinations and apply them to searches. This setting does not apply to non-PDF files inside PDF Portfolios.

Note: You can search by document properties alone, or use document property options in combination with a search for specific text.

Shows the additional options available in the Search window,in addition to the basic options.
